Multilingual Voice AI: Deploy One Agent That Speaks Every Customer’s Language

Written by:

Rohan Chaturvedi

|

Last updated on:

April 20, 2026

|

Fact Checked by :

Namitha Sudhakar

|

According to: Editorial Policies

Too Long? Read This First

- Multilingual voice AI lets a single agent detect, respond, and switch languages in real time.

- The real cost of language-specific support isn't just headcount. It's duplicated tooling, fragmented brand voice, and a support architecture that breaks at the edges.

- Real-time language switching and pre-configured language selection are not the same thing. Only real-time switching gives you a single deployment that serves all markets simultaneously.

- WhatsApp-first businesses get an outsized advantage. Multilingual voice AI handles language detection in voice messages, not just text, making a single WhatsApp number viable across every market you serve.

- Voice cloning works well within language families today. True cross-lingual cloning is emerging, but it's not yet uniformly production-ready across all language pairs.

- Astra supports 30+ languages with automatic detection and real-time switching, deployable on WhatsApp and your website without an engineering team.

The businesses winning in multilingual markets stopped treating language as a routing problem and started treating it as a conversation capability.

Every market you enter comes with its own language expectations.

Customers in Mexico expect Spanish, while those in the UAE expect Arabic. Customers in India may switch between Hindi and English mid-sentence and expect the agent on the other end to keep up.

The default architecture for this is fragmentation: a separate bot for each language, separate agent queues, separate QA processes, separate maintenance cycles.

It increases your operational cost, fragments your brand voice across languages, and breaks the moment a customer switches languages mid-call.

The solution is a single multilingual voice AI agent that detects language in real time, responds fluently, and switches between languages seamlessly.

This guide explains how a multilingual voice AI agent works, where it delivers the most impact, and how to deploy one without an engineering team.

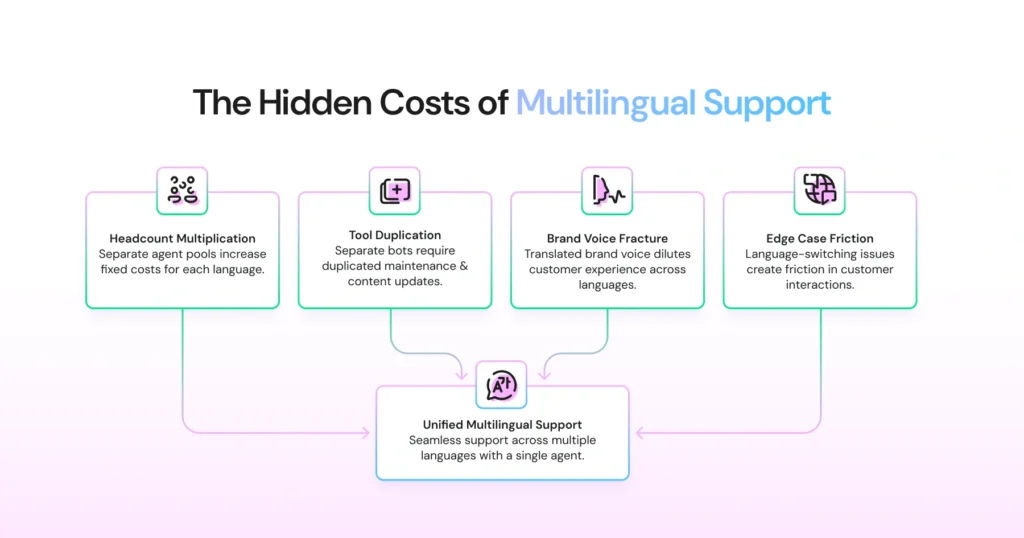

The Hidden Cost of Language-Specific Customer Support

Running a separate support infrastructure per language feels like a solved problem until you add up what it actually costs.

Headcount Multiplies with Every Language You Add

A business serving English, Spanish, and Arabic-speaking customers typically maintains three distinct agent pools: hired, trained, and scheduled separately.

When volume spikes in one language, you can’t redeploy agents from another. You’re not running one support operation; you’re running three, with all the fixed costs that implies.

Tooling Isn’t Shared, It’s Duplicated

When you have separate bots in different languages, you also need different maintenance cycles. Change your pricing or update a returns policy, and you’re not making one edit; you’re making one edit per language.

Every language you maintain separately adds its own overhead:

- Separate conversation flows to build and version

- Separate intent libraries to maintain

- Separate QA cycles every time your product or policy changes

- Native-speaker review as a mandatory gate before any update goes live

Miss a single update, and a Spanish-speaking customer receives outdated information while the English version is already correct.

Brand Voice Fractures Across Languages

Your brand voice was defined once. By the time it reaches a third language, it is a copy of a copy. Customers in different markets are not getting your experience; they are getting a version of it. And there is no systematic way to close that gap.

Edge Cases Expose the Gaps Your Architecture Created

The real cost of language-specific support doesn’t show up in your tooling budget. It shows up the moment the system meets a real customer.

A caller opens in Spanish on your English line. A bilingual shopper switches languages mid-conversation. An agent puts them on hold, not because the product failed, but because the architecture was never built to handle this.

Multilingual voice AI removes the architecture that creates these edge cases. It does so not by translating the experience, but by making language switching a native capability of a single agent from the start.

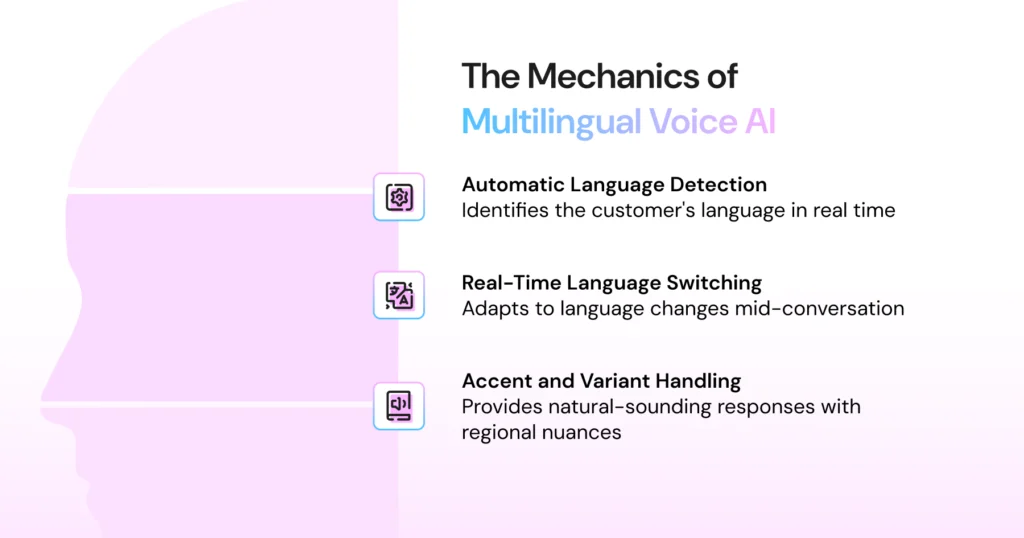

How Multilingual Voice AI Works: Language Detection to Language Switching

Most businesses build multilingual support the same way: a language menu at the start, a separate bot behind each option, and a customer who has to declare their preference before the conversation even begins.

That is not how language actually works. People don’t select a language. They just speak. Multilingual voice AI is built around that reality.

Within a customer’s first few words, the agent knows what language to respond in: no menu, no selection, no configuration required on either side.

Here is what that looks like under the hood.

Step 1: Language Detection at the First Utterance

Think about how your best multilingual employee handles a call. They hear the first few words, instantly know what language to respond in, and get on with the conversation. Unlike most support systems, there is no menu, no clarifying question, and no awkward pause.

Astra works the same way. The moment a customer speaks, the agent identifies the language and responds in it, typically within the first two or three words. It does not wait for a full sentence and does not ask for a preference. It just replies.

This works across accents and regional variants, too. A customer speaking Mexican Spanish and one speaking Castilian Spanish both get a response in Spanish. A Gulf Arabic speaker and a Levantine Arabic speaker are both recognized as Arabic speakers.

The agent handles the distinction automatically; your customer never has to flag it, explain it, or repeat themselves.
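As a rough illustration of the variant handling described above, the sketch below collapses a regional locale tag into a base language for the reply while keeping the variant available for later accent selection. The tag format (BCP 47 style strings such as `es-MX`) and the names `DetectedLanguage` and `normalize_tag` are assumptions made for this example; they are not Astra’s actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectedLanguage:
    base: str      # language used for the reply, e.g. "es"
    variant: str   # full tag as detected, e.g. "es-MX"


def normalize_tag(tag: str) -> DetectedLanguage:
    """Split a locale tag such as 'es-MX' into base language and variant."""
    cleaned = tag.strip().replace("_", "-")
    base = cleaned.split("-")[0].lower()
    return DetectedLanguage(base=base, variant=cleaned)


# Mexican and Castilian Spanish resolve to the same reply language,
# as do two regional Arabic tags (region codes are illustrative).
for tag in ("es-MX", "es-ES", "ar-AE", "ar-LB"):
    print(tag, "->", normalize_tag(tag).base)
```

Keeping the variant alongside the base language means the customer never has to state it, yet it is still there when the agent picks an accent.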

Step 2: Real-Time Language Switching Mid-Conversation

Bilingual customers switch languages naturally. A Hindi-speaking customer might open in Hindi and shift to English when describing a technical issue.

A Spanish-English speaker might toggle between both depending on what’s easier to express in the moment.

A multilingual voice AI handles this without interruption. When the speech-to-text (STT) model detects a language shift, the agent switches its response language to match. That means no hold music, no transfer, and no “let me connect you with someone who speaks English.”

The conversation continues as if the switch never happened.
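One way to picture mid-conversation switching is as a small piece of state that is updated on every turn. The sketch below is a minimal version of that idea, assuming the STT step reports a language code and a confidence score for each utterance. The confidence threshold is an assumption added here so that a single uncertain detection does not flip the reply language; the article does not describe how any particular platform decides this.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LanguageTracker:
    """Tracks the reply language across the turns of one conversation."""
    min_confidence: float = 0.8      # hypothetical threshold
    current: Optional[str] = None

    def update(self, detected: str, confidence: float) -> str:
        # First turn: adopt whatever was detected.
        if self.current is None:
            self.current = detected
        # Later turns: switch only on a confident detection of a new language.
        elif detected != self.current and confidence >= self.min_confidence:
            self.current = detected
        return self.current


tracker = LanguageTracker()
turns = [("hi", 0.95), ("hi", 0.90), ("en", 0.55), ("en", 0.92)]
reply_languages = [tracker.update(lang, conf) for lang, conf in turns]
print(reply_languages)  # ['hi', 'hi', 'hi', 'en']
```

The customer who opens in Hindi and moves to English gets English replies once the shift is clear, and the conversation state carries over untouched.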

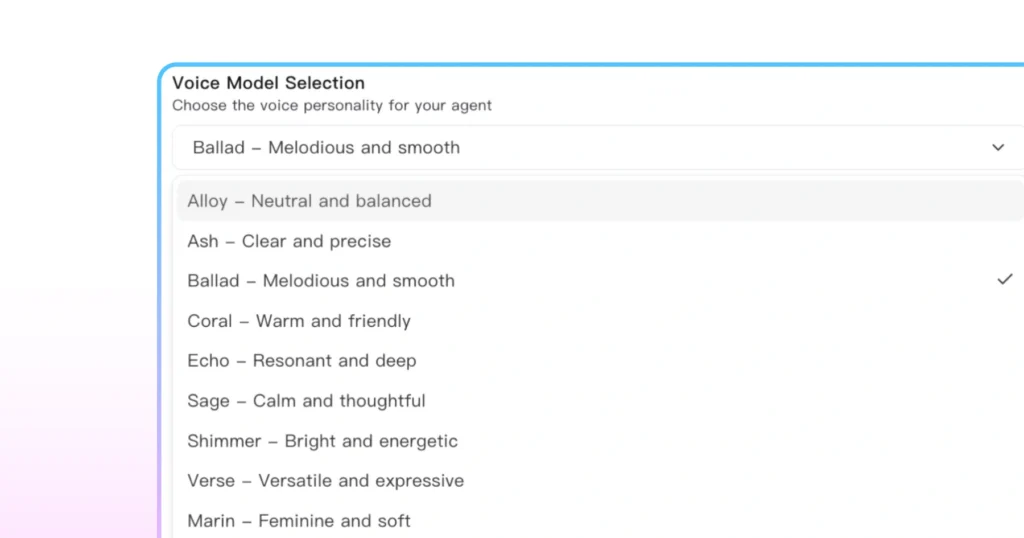

Step 3: Accent and Variant Handling

Language detection is one capability; natural-sounding responses are another. The best multilingual voice AI systems don’t just respond in the right language. They respond in the right accent and regional variant.

A customer in Colombia shouldn’t hear a response that sounds like it was trained exclusively on Madrid Spanish. Accent selection is a function of both the detected language variant and, where configurable, the target market.
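To make “a function of both the detected language variant and the target market” concrete, here is a small sketch of a voice lookup. The voice catalogue, the voice identifiers, and the priority order (configured market first, then detected variant, then a neutral base-language voice) are all assumptions chosen for illustration.

```python
from typing import Optional

# Hypothetical voice catalogue keyed by locale tag.
VOICES = {
    "es-MX": "voice_es_mx",
    "es-CO": "voice_es_co",
    "es-ES": "voice_es_es",
    "es": "voice_es_neutral",
}


def pick_voice(detected_variant: str, target_market: Optional[str] = None) -> str:
    """Prefer the configured market, then the detected variant, then the base language."""
    base = detected_variant.split("-")[0]
    candidates = []
    if target_market:
        candidates.append(f"{base}-{target_market}")
    candidates += [detected_variant, base]
    for tag in candidates:
        if tag in VOICES:
            return VOICES[tag]
    raise KeyError(f"no voice configured for {detected_variant}")


print(pick_voice("es-ES", target_market="CO"))  # voice_es_co
print(pick_voice("es-AR"))                      # voice_es_neutral
```

With this ordering, a deployment configured for Colombia answers in a Colombian voice even if the detector reports Castilian Spanish, and an unlisted variant falls back to a neutral voice rather than failing.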

| What this means in practice: A single multilingual voice AI agent can handle an English call, a Spanish call, and an Arabic call in sequence, or a single call that moves between all three, without any reconfiguration between them. The agent serving your Mexico City customers is the same agent serving your Dubai customers. One deployment. One conversation flow. One QA process. |

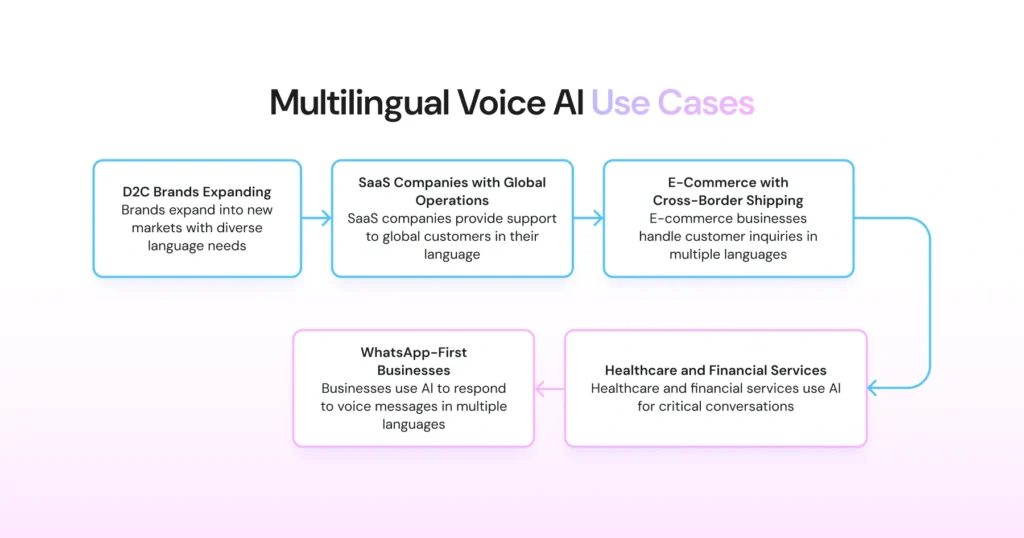

5 High-Impact Use Cases for Multilingual Voice AI

Multilingual voice AI delivers value wherever language barriers create support costs or conversion drop-off. The impact is sharpest in five specific contexts.

1. D2C Brands Expanding into LATAM, the Middle East, and Southeast Asia

Cross-border D2C growth stalls when customer support can’t keep pace with new markets. A brand that ships to Mexico, Colombia, and Chile is effectively serving three Spanish-language markets with regional vocabulary differences, plus its English-speaking customer base.

Multilingual voice AI lets a single agent handle the full language spread of a LATAM expansion without standing up new support infrastructure per country.

The same applies in Southeast Asia, where a single market like Malaysia or Singapore may require support for English, Malay, and Mandarin within the same customer base.

2. SaaS Companies with Global Customer Success Operations

Enterprise SaaS customers expect high-touch support in their language, not translated documentation and an English-only success team.

For SaaS companies managing accounts across Europe, the Middle East, and Asia, multilingual voice AI extends the reach of a lean CS team without the headcount cost of hiring language-specific customer success managers for every region.

Onboarding calls, renewal conversations, and escalations can all be handled by a single multilingual agent, with the same conversation flow and quality standards across all languages.

3. E-Commerce with Cross-Border Shipping

Order status, returns, and delivery exceptions are high-volume, time-sensitive inquiries that require immediate answers in the customer’s language.

For e-commerce operators shipping across language borders, a multilingual voice AI handles the entire post-purchase support loop without routing customers through language-specific queues that add wait time and operational overhead.

4. Healthcare and Financial Services in Immigrant-Dense Markets

Some customer conversations leave no room for confusion. A patient asking about medication or a customer disputing a financial transaction needs to explain details clearly in a language they are fully comfortable with, not one they are struggling to navigate.

In these situations, a misunderstanding is more than a poor support experience. It can introduce compliance risks, safety concerns, and serious operational consequences.

For healthcare providers and financial institutions, multilingual voice AI is less about cost savings and more about reducing risk.

Supporting Spanish-speaking patients in the US, Arabic-speaking banking customers in Europe, or Hindi-speaking users across Gulf financial services helps ensure that language never becomes the reason a critical conversation fails.

5. WhatsApp-First Businesses Operating Across Multiple Countries

For businesses where WhatsApp is the primary support channel (common across LATAM, the Middle East, and South Asia), multilingual voice AI extends naturally to voice messages.

Customers who send voice notes in Arabic, Spanish, or Hindi get responses in kind, without the business needing to route those messages to language-specific agents.

This use case is covered in depth in the next section.

Why Is WhatsApp + Multilingual Voice AI a Natural Fit?

WhatsApp has over 3 billion users globally, and the majority of them are not in English-speaking markets.

LATAM, the Middle East, South Asia, and Sub-Saharan Africa are WhatsApp-first regions, where customers default to WhatsApp for everything from customer service to purchase decisions.

For businesses operating in these markets, WhatsApp isn’t a secondary channel. It’s the primary one.

The challenge is that WhatsApp support at scale has traditionally required language-specific routing. A message in Arabic goes to the Arabic queue, and a voice note in Spanish goes to the Spanish queue.

The channel is ubiquitous; the infrastructure behind it is fragmented. Multilingual voice AI changes the equation, specifically because of how it handles voice messages.

Language Detection in Voice Messages

Text-based multilingual support is relatively straightforward: detect the script, route accordingly. Voice messages are harder. There’s no script to read.

The AI has to process compressed audio, account for accent variation, identify the language from speech alone, and do it fast enough that the response doesn’t feel delayed.

This is where a purpose-built multilingual voice AI separates itself from general-purpose chatbots retrofitted with translation layers.

When a customer in Riyadh sends a voice note in Arabic, or a customer in São Paulo sends one in Portuguese, the agent detects the language from the audio directly, constructs a response in that language, and replies without any human routing step in between.
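The voice-note flow described here can be sketched as three steps wired together: transcribe and detect, generate a reply, synthesize audio. The function below takes each step as a parameter so it runs on its own. The stand-in components are placeholders, not real model calls, and none of the names reflect an actual WhatsApp or Astra API.

```python
from typing import Callable, Tuple


def handle_voice_note(
    audio: bytes,
    transcribe: Callable[[bytes], Tuple[str, str]],   # audio -> (language, text)
    generate_reply: Callable[[str, str], str],        # (language, text) -> reply text
    synthesize: Callable[[str, str], bytes],          # (language, reply) -> audio
) -> Tuple[str, bytes]:
    """Detect the language from audio, reply in it, and return the reply as audio."""
    language, text = transcribe(audio)   # language comes from speech, not a menu
    reply_text = generate_reply(language, text)
    return language, synthesize(language, reply_text)


# Stand-in components so the sketch runs on its own; real ones would call models.
def fake_transcribe(audio: bytes) -> Tuple[str, str]:
    return "pt", "Onde está o meu pedido?"


def fake_reply(language: str, text: str) -> str:
    return f"[{language}] reply to: {text}"


def fake_synthesize(language: str, reply_text: str) -> bytes:
    return reply_text.encode("utf-8")


language, audio_out = handle_voice_note(b"...", fake_transcribe, fake_reply, fake_synthesize)
print(language, audio_out.decode("utf-8"))
```

Nothing in the pipeline routes by language. The detected language is passed along as data, which is what removes the human routing step.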

What This Unlocks for Global Businesses

For a business running WhatsApp as its primary support channel across multiple countries, a multilingual voice AI agent means:

- One WhatsApp number serving every market, regardless of language

- Voice note support in every covered language, not just text

- Consistent response quality and brand voice across all language variants

- No manual routing, language-specific queues, or hold time while a bilingual agent becomes available

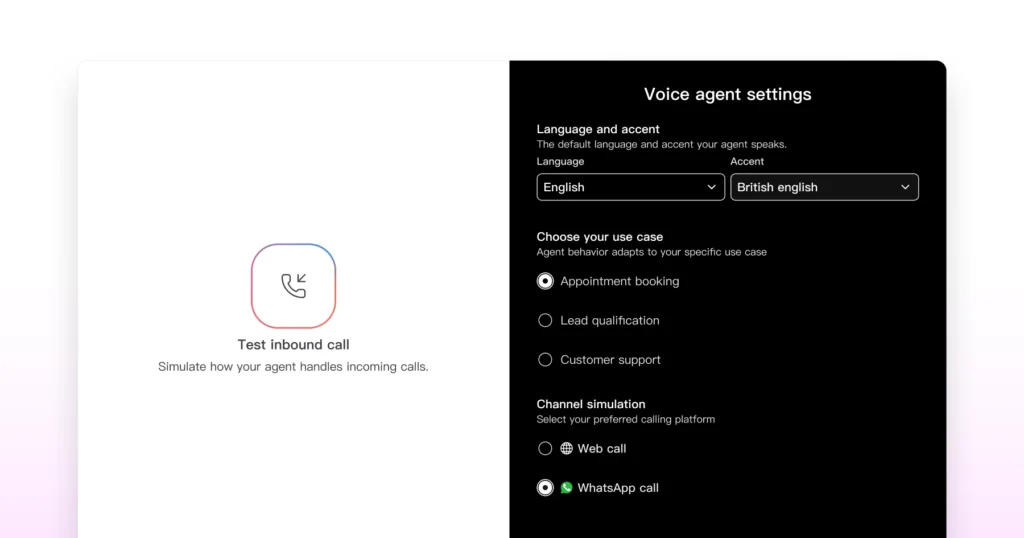

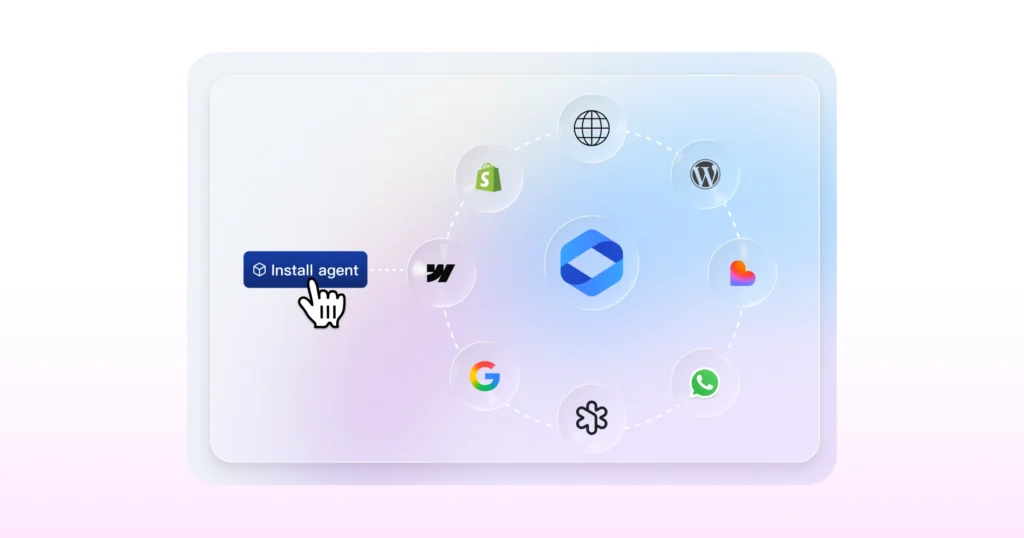

Astra Voice 2.0 on WhatsApp

Astra’s multilingual voice AI is deployable directly on WhatsApp, with automatic language detection across voice and text messages.

A single Astra deployment handles inbound conversations in any of its supported languages, detecting, responding to, and switching in real time across your entire WhatsApp customer base, regardless of which markets they’re in.

Few, if any, tools in the voice AI agent category currently offer this combination of WhatsApp-native deployment and real-time multilingual voice handling.

For businesses that use WhatsApp as their primary channel, this capability makes a single-agent deployment viable at global scale.

What to Look for When Evaluating Multilingual Voice AI Platforms

Not all multilingual voice AI platforms are built the same way. The number of languages supported is the most visible differentiator, but it’s rarely the most important one.

Read on to understand what to look for when assessing whether a platform can actually serve your markets.

1. Number of Languages Supported

The floor question, but worth asking precisely. Some platforms advertise multilingual support but cover only the highest-volume European languages, such as English, Spanish, French, and German.

If your markets include Arabic, Hindi, Bahasa Indonesia, or Swahili, that coverage gap matters immediately.

Look beyond the headline number. Check whether the platform supports the specific language variants your customers speak: Mexican Spanish vs. Castilian Spanish, Gulf Arabic vs. Levantine Arabic, Brazilian Portuguese vs. European Portuguese.

A platform that lists “Spanish” as a supported language may perform well in Madrid and poorly in Monterrey.
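A quick way to look beyond the headline number is to compare the variants your markets need against the variants a vendor actually lists. The sketch below does that with plain sets. The locale tags and the three status labels are illustrative assumptions, and a “covered” result still says nothing about accent quality, which needs testing with native speakers.

```python
from typing import Dict, Set


def coverage_gaps(required: Set[str], supported: Set[str]) -> Dict[str, str]:
    """Classify each required locale as covered, base language only, or missing."""
    supported_bases = {tag.split("-")[0] for tag in supported}
    report = {}
    for tag in sorted(required):
        if tag in supported:
            report[tag] = "covered"
        elif tag.split("-")[0] in supported_bases:
            report[tag] = "base language only"
        else:
            report[tag] = "missing"
    return report


# Example inputs are made up for illustration.
required = {"es-MX", "ar-AE", "sw-KE"}
supported = {"es-ES", "ar-AE", "en-US"}
for tag, status in coverage_gaps(required, supported).items():
    print(tag, "->", status)
```

The “base language only” rows are the ones to probe hardest in a demo, since they are where a platform lists the language but not your customers’ variant.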

2. Quality of Accent Handling Per Language

Language detection and accent handling are separate capabilities. A platform can accurately identify that a customer is speaking Arabic while still producing responses that sound unnatural to a Gulf Arabic speaker.

For customer-facing deployments, accent quality directly affects how the agent is received. A response in the wrong regional variant signals immediately that the system isn’t built for that market.

When evaluating platforms, test with native speakers from your actual target markets, not just in the language’s standardized form. The difference between passing a demo and passing a real customer interaction is often accent-deep.

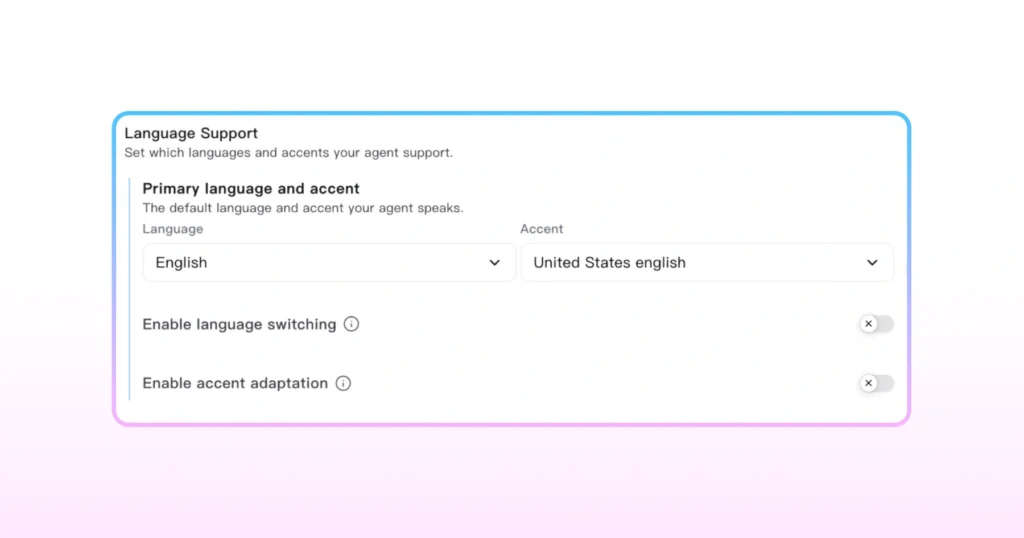

3. Real-Time Switching vs Pre-Configured Language Selection

This is the most important architectural distinction to probe. Two types of multilingual support exist in the market:

- Pre-configured language selection: The customer chooses a language at the start of the interaction, and the bot loads the appropriate version. This is not real-time multilingual AI; it’s multiple monolingual bots behind a language menu.

- Real-time language detection and switching: The agent detects language automatically from speech, responds in that language, and switches mid-conversation if the customer’s language changes. This is true multilingual voice AI.

The distinction matters operationally. Pre-configured selection still requires you to maintain separate conversation flows per language. Real-time switching means one flow handles all languages, which is where the maintenance and consistency benefits actually come from.
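The maintenance difference is easy to see in data. In the sketch below, the pre-configured setup stores one copy of a policy value per language, so a missed edit leaves one copy stale, while the shared flow stores it once. The flow structure, the `returns_window_days` key, and the values are all invented for illustration.

```python
# Pre-configured selection: one flow per language, each edited separately.
per_language_flows = {
    "en": {"returns_window_days": 30},
    "es": {"returns_window_days": 30},
    "ar": {"returns_window_days": 14},   # stale: this copy missed the last update
}

# Real-time switching: one flow, with the language applied at response time.
shared_flow = {"returns_window_days": 30}


def stale_languages(flows: dict, key: str, expected) -> list:
    """List the languages whose copy of a policy value is out of date."""
    return sorted(lang for lang, flow in flows.items() if flow.get(key) != expected)


print(stale_languages(per_language_flows, "returns_window_days", 30))  # ['ar']
```

With a single shared flow there is no equivalent check to run, because there is only one copy of the value to update.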

4. Fallback Behavior When a Language Isn’t Supported

Every platform has a coverage ceiling. What happens when a customer speaks a language outside the platform’s supported set is a test of how well the platform was designed for real-world deployment.

The right fallback behavior depends on your operation, but the platform should give you control over it. Reasonable options include:

- Graceful acknowledgment in the detected language, where possible

- Escalation to a human agent with context passed through

- A configurable fallback language (typically English) with a clear handoff message

Platforms that simply fail silently, dropping into English with no acknowledgment, create a worse experience than no automation at all.
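The fallback options above can be treated as an explicit, configurable policy rather than an accident of implementation. The sketch below is one hedged way to model that. The policy names, the `FallbackDecision` fields, and the notice keys (imagined here as IDs of pre-written messages) are hypothetical and not taken from any specific platform.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set


class FallbackPolicy(Enum):
    ACKNOWLEDGE = "acknowledge"            # short notice in the detected language
    ESCALATE = "escalate"                  # hand off to a human, passing context
    DEFAULT_LANGUAGE = "default_language"  # continue in a configured language


@dataclass(frozen=True)
class FallbackDecision:
    policy: FallbackPolicy
    reply_language: str
    notice: str  # always non-empty, so the agent never fails silently


def decide_fallback(
    detected: str,
    supported: Set[str],
    policy: FallbackPolicy,
    default_language: str = "en",
) -> Optional[FallbackDecision]:
    """Return None when the language is supported, otherwise an explicit decision."""
    if detected in supported:
        return None
    if policy is FallbackPolicy.ACKNOWLEDGE:
        return FallbackDecision(policy, detected, "unsupported_language_notice")
    if policy is FallbackPolicy.ESCALATE:
        return FallbackDecision(policy, default_language, "handoff_to_human_notice")
    return FallbackDecision(policy, default_language, "switching_to_default_notice")


decision = decide_fallback("sw", {"en", "es", "ar"}, FallbackPolicy.DEFAULT_LANGUAGE)
print(decision)
```

Every branch for an unsupported language carries a notice, which is the property that separates a designed fallback from a silent drop into English.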

| Astra’s Language Coverage: Astra Voice 2.0 supports 30+ languages with automatic detection and real-time switching. Coverage includes the major languages relevant to global business operations, such as English, Spanish, Arabic, Hindi, Portuguese, French, and Mandarin, with accent handling tuned for regional variants, not just standardised language models. For a full breakdown of supported languages and a comparison of how leading platforms approach multilingual support, see our Best voice AI agent comparison guide. |

The Brand Voice Challenge: Does Voice Cloning Work Across Languages?

If you’ve invested in a branded voice (a specific tone, cadence, and personality that represents your company in customer conversations), the natural question when deploying multilingual voice AI is whether that voice travels across languages.

Can you clone the voice of your English-speaking brand representative and deploy it for Spanish, Arabic, and Hindi conversations?

The honest answer is: partially, and it depends on what you mean by “voice.”

What Voice Cloning Currently Does Well

Voice cloning technology has matured significantly. For monolingual deployments, it’s possible to capture a speaker’s vocal characteristics, such as pitch, pacing, timbre, and intonation patterns, and reproduce them convincingly in a synthetic voice that sounds like a specific person or persona.

For multilingual deployments in the same language family, and especially across regional variants of one language, the results are increasingly strong. A cloned English voice, adapted for Australian, British, and American English variants, will sound consistent across all three.

A Spanish voice cloned for Mexican and Colombian Spanish will carry recognizably similar characteristics.

Where Cross-Lingual Cloning Gets Harder

The challenge appears when you move across languages, for example cloning an English voice and using it for Arabic or Hindi conversations.

Language is more than vocabulary and grammar. Every language has its own rhythm, tone, and sound patterns. A voice trained on English speech does not naturally carry those patterns into Arabic or Hindi.

The result may still be understandable, but it often sounds slightly unnatural. Customers usually notice this, even if they cannot clearly explain why.

Most voice cloning systems today are monolingual by design. They clone within a language, not across languages.

Cross-lingual voice cloning aims to maintain a speaker’s voice consistency across language switches. While the technology is advancing quickly, it still isn’t production-ready for every language combination.

What This Means for Your Deployment

In practice, most businesses deploying multilingual voice AI make one of two choices.

- Use a consistent synthetic persona: A voice designed to sound coherent and on-brand across all supported languages, without being a clone of a specific person. This is the most reliable approach today and still allows for significant brand voice customisation in terms of tone, pacing, and personality.

- Use language-specific voices within a consistent framework: Different voices per language, but governed by the same brand guidelines for tone and register. This sacrifices voice consistency for naturalness, which is often the right trade-off when serving markets where accent authenticity matters more than voice uniformity.

Cross-lingual cloning, where a single cloned voice is deployed fluently across all languages, is the direction the technology is heading.

For some language pairs, particularly within the same language family, it’s viable today. For others, it remains a near-term capability rather than a current one.

How Does Astra Voice 2.0 Handle Brand Voice Across Languages?

Astra supports voice customization across all of its 30+ supported languages, with accent and variant tuning per market.

For businesses that need a consistent synthetic persona across languages, Astra’s voice configuration allows tone, pacing, and register to be set globally and applied uniformly.

Your Arabic-speaking and Spanish-speaking customers get the same brand experience, even though the voice adapts to each language.
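The “set globally, applied uniformly” idea can be sketched as one style object that every language inherits, with optional per-language adjustments. The field names, the default values, and the override mechanism are assumptions for illustration only and do not describe Astra’s configuration format.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class VoiceStyle:
    tone: str
    pacing: float     # relative speaking rate, 1.0 = default
    register: str


# Hypothetical brand-wide defaults.
GLOBAL_STYLE = VoiceStyle(tone="warm", pacing=1.0, register="professional")


def style_for(language: str, overrides: dict) -> VoiceStyle:
    """Start from the global style and apply any per-language overrides."""
    return replace(GLOBAL_STYLE, **overrides.get(language, {}))


overrides = {"es": {"pacing": 1.1}}
print(style_for("ar", overrides))   # inherits the global style unchanged
print(style_for("es", overrides))   # same tone and register, slightly faster pacing
```

Because every language starts from the same object, a change to the brand tone is made once and reaches all markets, while small local adjustments stay explicit.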

For more on Astra’s voice cloning capabilities and how to configure a branded voice for your deployment, see our AI voice cloning guide.

Multilingual Voice AI: One Agent, Every Language, One Deployment

Real-time detection, mid-conversation switching, accent handling, and voice cloning across 30+ languages are now capabilities of a single deployable agent.

The barrier to serving a customer in their language has dropped from an operational decision to a configuration one.

Astra supports 30+ languages with automatic detection, real-time switching, and voice cloning, deployable on WhatsApp and your website without an engineering team. Start your free trial now.

Frequently Asked Questions

Yes. A multilingual voice AI detects language automatically from the first few words of a conversation and responds accordingly. No language menu, pre-selection, or separate bot per language required.

The agent falls back to a configured default language, typically English, and signals the handoff clearly so the customer isn’t left confused. The best platforms let you customise this fallback behaviour per market.

Yes, and it’s particularly valuable there. Serving patients and customers in their native language reduces miscommunication risk and improves the precision of conversations where getting the details right matters most.

Separate bots require separate builds, separate maintenance, and a routing layer to direct customers to the right one. Real-time switching happens within a single agent and a single conversation flow, so one deployment serves all languages simultaneously.

Yes. Astra detects language in both text and voice messages on WhatsApp and responds in the customer’s language without any manual routing. A single WhatsApp number can serve your entire global customer base.