Voice-First Web Experiences: Navigating with Voice Commands

Written by: Shreya | Last updated on: April 20, 2026 | Fact checked by: Namitha Sudhakar | According to: Editorial Policies

Too Long? Read This First

- Unlike simple voice-to-text buttons, voice-first sites treat the entire user journey as a dynamic, two-way conversation.

- Speaking is 3x faster than typing, making web navigation frictionless for multitaskers and users with disabilities.

- AI agents like Astra allow users to start a voice conversation on WhatsApp and pick up exactly where they left off on the website.

- These systems function through a "relay race" of speech recognition, intent decoding, backend action, and human-like text-to-speech.

- Industries like e-commerce and healthcare use voice to enable hands-free product discovery, appointment booking, and multilingual onboarding.

Website visitors want browsing convenience.

Whether it is comparing prices, looking for product reviews, or making a payment, they want fast action.

Imagine how impressed a potential customer would be if they could simply speak and get results, instead of typing.

That is a voice-first web experience, an interface where users can say what they need to get things done.

How is this possible?

Voice-first web experiences are created by voice agents that interact with users across websites or apps. They are powered by smart technology (intelligence layers) that understands and responds to your voice.
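The summary at the top describes this as a "relay race" of speech recognition, intent decoding, backend action, and text-to-speech. Here is a minimal sketch of that hand-off. Every function in it is a hypothetical stand-in, not any vendor's API: the "audio" is just text encoded as bytes, and intent decoding is plain keyword matching, so that the four stages stay visible.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    name: str   # e.g. "track_order"
    query: str  # the transcript, kept for the backend


def recognize_speech(audio: bytes) -> str:
    # Stand-in for a speech-to-text engine: this sketch pretends the
    # audio bytes are already UTF-8 text.
    return audio.decode("utf-8").strip().lower()


def decode_intent(transcript: str) -> Intent:
    # Stand-in for intent decoding: simple keyword matching.
    if "order" in transcript:
        return Intent("track_order", transcript)
    if "book" in transcript or "appointment" in transcript:
        return Intent("book_appointment", transcript)
    return Intent("fallback", transcript)


def run_action(intent: Intent) -> str:
    # Stand-in for the backend call; returns the text to speak back.
    replies = {
        "track_order": "Your order is on its way.",
        "book_appointment": "Which day works best for you?",
    }
    return replies.get(intent.name, "Sorry, could you rephrase that?")


def synthesize_speech(reply: str) -> bytes:
    # Stand-in for text-to-speech: returns the reply as bytes.
    return reply.encode("utf-8")


def handle_turn(audio: bytes) -> bytes:
    """Pass one spoken request through all four stages in order."""
    transcript = recognize_speech(audio)
    intent = decode_intent(transcript)
    reply = run_action(intent)
    return synthesize_speech(reply)
```

In a real deployment each stage would call out to a dedicated service. The point of the sketch is only that each stage consumes the previous stage's output.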

This guide covers what voice-first experiences are, how they work, and how they are being adopted today.

Let’s get started.

Where Voice-First Experiences Are Actually Taking Off

Many brands are adopting voice-first experiences on their websites, letting visitors speak instead of typing.

When a user opens such a website, the voice agent prompts them to switch on the microphone or say a wake word, takes their voice input, and completes a specific action.

Visitors can have real-time conversations, browse, and shop faster.

The voice-first experience is not limited to your website; it is also available on WhatsApp, where most of your customers are active and come with queries about your products or services.

So, the shift from typing to voice interactions has marked a significant change for brands. More and more brands are looking to embed voice AI agents to simplify their website experience across e-commerce, healthcare, automotive, and banking sectors.

For businesses, this is a clear opportunity. Instead of forcing users to adapt to voice on websites, they can meet them wherever they are. AI voice agents can work anywhere, on websites, messaging apps, or calls, to drive sales.

The Web Experience Shift: Voice-Enabled vs. Voice-First

With over 35% of households using smart speakers, voice isn’t a “future” tech; it’s a current habit. Users now expect to talk to their screens as naturally as they do to Alexa or Siri.

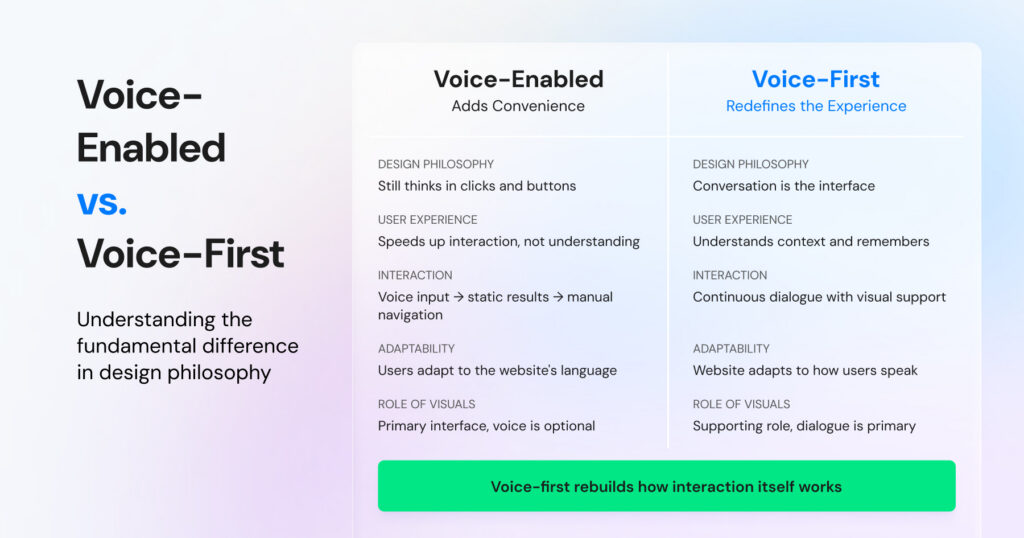

Businesses are now shifting from adding a basic voice bot to a website to building AI-powered conversational agents. This differs from the older, voice-enabled style of customer interaction. Here is a quick comparison:

- Voice-Enabled (The Old Way): Simply adds a microphone to a search bar. It saves a few keystrokes, but the user still has to manually navigate menus and filters once the results appear.

- Voice-First (The AI Agent Way): Reimagines the entire journey as a conversation. Instead of “searching,” the user is “talking” to the website, and the website responds by performing actions, asking clarifying questions, and guiding the user to a goal.

Where Voice-First Actually Works Today

With a voice-first UI, you can talk to, understand, and empathize with customers naturally.

There are two versions of this experience. The first is the standard automated approach, which responds in a machine-like voice and follows fixed instructions, such as “press 1” or “press #.”

The second is more advanced: voice cloning technology. Here, instead of a robotic, generic AI voice, the AI agent you deploy talks in your voice.

Businesses can personalize their voice through this technology. You can clone your voice for the agent so customers feel like they’re talking directly to you, rather than to a generic AI-generated voice.

Wati, for example, offers an orchestration layer called Astra to power voice-first experiences. Astra Voice brings AI-powered voice agents to WhatsApp.

This allows businesses to interact with customers where they already are, helping answer questions, qualify leads, book appointments, and provide support through simple voice conversations.

Having human-centric conversations across platforms really creates a good impression of the brand.

The sales team engages on WhatsApp via voice interactions or AI-agentic support to handle bulk queries efficiently, automate conversations, and close deals with minimal human effort.

Yes, you read that right. Learn more about how this works.

From WhatsApp to Web: Continuous Voice Personalization

The most sophisticated use case today is a seamless transition from a WhatsApp marketing workflow to a website.

Even if a lead clicks on a third-party web link via WhatsApp, their voice experience on the website doesn’t break, and the same holds in the other direction.

This is where Astra, the voice agent tool mentioned above, comes back in. Astra runs WhatsApp conversations on its own and adds an intelligence layer to help users find the information they are looking for.

If a lead starts a voice conversation on WhatsApp with an AI agent that can adopt your voice and speak to customers in natural language rather than robotic phrasing, that context follows them everywhere.

When they click through to your website or web app, the web-based voice session picks up exactly where they left off. No repeating details; just a continuous, revenue-driving conversation.
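The article does not describe how this handoff is implemented, so the following is only one plausible sketch, not Astra's actual mechanism. It assumes both channels can reach a shared store, and that the WhatsApp side embeds a single-use token in the website link. `ConversationContext`, `HandoffStore`, and their methods are hypothetical names invented for this illustration.

```python
import secrets
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ConversationContext:
    customer_id: str
    channel: str                                   # "whatsapp" or "web"
    collected: dict = field(default_factory=dict)  # details already given
    last_intent: str = ""


class HandoffStore:
    """In-memory stand-in for a store that both channels can reach."""

    def __init__(self) -> None:
        self._pending: Dict[str, ConversationContext] = {}

    def create_link_token(self, context: ConversationContext) -> str:
        # Called on the WhatsApp side before sending the website link.
        token = secrets.token_urlsafe(16)
        self._pending[token] = context
        return token

    def resume(self, token: str, channel: str) -> Optional[ConversationContext]:
        # Called on the website when the visitor arrives with the token.
        # pop() makes the token single-use.
        context = self._pending.pop(token, None)
        if context is not None:
            context.channel = channel
        return context
```

With something like this in place, the web voice session can read `collected` and `last_intent` instead of asking the visitor to repeat details they already gave on WhatsApp.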

Beyond Search: 4 Real-World Use Cases for Voice-Enabled Web Experiences

As mobile browsing dominates and users demand “frictionless” experiences, a voice UI web experience is becoming a competitive necessity.

From boosting e-commerce conversions to streamlining heavy-duty logistics, here are four ways businesses are actually using voice technology today.

1. Frictionless E-Commerce Product Discovery

Imagine a shopper on a furniture site. Instead of toggling five different dropdown filters, they simply say: “Show me grey sofas under ₹40,000.”

A voice-enabled search layer interprets intent, filters the catalog in real time, and serves results instantly. This zero-typing experience is convenient for mobile shoppers who are often multitasking.
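To make "interprets intent, filters the catalog" concrete, here is a small sketch that turns the sofa request into structured filters and applies them. It assumes the speech has already been transcribed to text. The keyword lists, the `Product` fields, and the rule-based parsing are all illustrative assumptions; a production system would more likely use a trained language model for this step.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Product:
    name: str
    category: str
    color: str
    price: int  # in rupees


@dataclass
class SearchIntent:
    category: Optional[str]
    color: Optional[str]
    max_price: Optional[int]


# Tiny illustrative vocabularies; a real catalog would supply these.
COLORS = {"grey", "black", "blue", "beige"}
CATEGORIES = {"sofa", "chair", "table"}


def singular(word: str) -> str:
    # Drop one trailing "s" so "sofas" matches "sofa".
    return word[:-1] if word.endswith("s") else word


def parse_request(utterance: str) -> SearchIntent:
    text = utterance.lower()
    words = re.findall(r"[a-z]+", text)
    color = next((w for w in words if w in COLORS), None)
    category = next((singular(w) for w in words if singular(w) in CATEGORIES), None)
    # Match a price ceiling such as "under ₹40,000" or "below 40000".
    price = re.search(r"(?:under|below)\s*₹?\s*(\d[\d,]*)", text)
    max_price = int(price.group(1).replace(",", "")) if price else None
    return SearchIntent(category, color, max_price)


def filter_catalog(catalog: List[Product], intent: SearchIntent) -> List[Product]:
    # A filter that was not mentioned (None) does not restrict results.
    return [
        p for p in catalog
        if (intent.category is None or p.category == intent.category)
        and (intent.color is None or p.color == intent.color)
        and (intent.max_price is None or p.price < intent.max_price)
    ]
```

Calling `parse_request("Show me grey sofas under ₹40,000.")` yields a `SearchIntent` with category `sofa`, color `grey`, and a price ceiling of 40000, which `filter_catalog` then applies in a single pass.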

2. Hands-Free Efficiency in Healthcare & Logistics

In environments where hands are occupied, such as clinics, warehouses, or delivery vans, typing is a liability. Here is how embedding a voice layer can help in such scenarios:

- Healthcare: Patients can check in at kiosks via voice, reducing physical contact and wait times.

- Logistics: Warehouse operatives can process shipments by speaking SKU numbers as they move boxes.

Also, learn how the WhatsApp API for healthcare improves patient care and automates health operations.

3. Intelligent FAQ & Customer Support Flows

If users have to scroll through an FAQ portal with 100 answers, they will get lost in a maze.

By embedding a voice-first agent, you can help customers navigate the FAQ section and get their queries answered. Shifting customer support workflows to voice agents reduces ticket escalations, speeds up response times, and improves customer satisfaction.

4. Hyper-Local Multilingual Website Onboarding

With voice UI, you can cater to multiple demographics where users speak specific local languages. Voice models are trained on large datasets, enabling better handling of accents and variations.

Even if users speak in partial sentences, have heavy accents, or hesitate, voice models can still guide them through onboarding.

For businesses in linguistically diverse markets like South Asia or the Middle East, offering a “voice-first” welcome in a native tongue is a massive brand differentiator.

Along with fueling agentic experiences on the website, brands are also integrating them with WhatsApp for an omnichannel experience.

5 Key Benefits of Voice-first Web Experiences in 2026

As more people move away from traditional typed sign-ups and embrace voice-first platforms, brands can build better, more inclusive relationships with their visitors.

Voice-first web experiences help brands be inclusive, build a next-gen UI, and offer loyal visitors an edge that competitors do not.

Some brands also offer multimodal functionality, allowing customers to press a button that triggers an online action. Let’s look at 5 such key benefits:

- Speed & Efficiency: Speaking is much faster than typing on a mobile. A voice-first interface allows users to complete complex tasks, like booking a consultation or tracking an order, in a single sentence.

- True Accessibility: It removes barriers for users with visual impairments, motor disabilities, or dyslexia, making your site inclusive by design rather than as an afterthought.

- Hands-free: Voice-first design captures users in “situational impairment” moments, when they are driving, cooking, or multitasking, expanding the windows of time they can interact with your brand.

- Reduced Cognitive Load: Users don’t need to learn how your product catalog is organized. They just say what they need, and a voice UI helps them navigate with ease.

- Multimodal functionality: With brands now offering immersive virtual experiences, users can run virtual meetings on a surface (say, a wall) with just a touch of a button, or dictate a command to browse a catalog or read a review side by side.

The Hurdles: 5 Real-World Challenges of Voice-First Design

Implementing voice isn’t as simple as toggling a switch. To build an interface that users actually trust, developers must navigate several significant roadblocks:

- Invisible Voice UI: Because a voice UI has no visible menus or buttons like a graphical interface, users can’t see what the system can do or what is happening behind the scenes. They often suffer from “blank page syndrome,” not knowing where to go or what to say.

- Always Listening Anxiety: Trust is the primary currency of voice-powered websites. Users are often scared to grant microphone access, fearing that their data will be compromised.

- Environmental Interference: Background noise, like office chatter, wind, or traffic, alongside diverse regional accents, can cause traditional Speech-to-Text engines to fail.

- Latency: If a website takes 3+ seconds to process a spoken request, the user experience breaks. High latency makes the AI feel broken.

- Turn-taking: If a user asks a complex question, the system should give a verbal or visual cue, such as “uh, I need to check that,” to keep them engaged. Silent buffering may cause the user to disengage.
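The latency and turn-taking points can be combined into one pattern: start the slow lookup, and if it has not finished within a short budget, say a holding phrase before delivering the answer. Below is a minimal sketch using Python's `asyncio`. The function name `answer_with_filler`, the one-second default, and the filler wording are assumptions made for illustration, and `speak` stands in for whatever text-to-speech call a real system would use.

```python
import asyncio
from typing import Awaitable, Callable


async def answer_with_filler(
    lookup: Callable[[], Awaitable[str]],
    speak: Callable[[str], None],
    filler_after: float = 1.0,  # assumed budget, in seconds
    filler: str = "Let me check that for you.",
) -> None:
    # Start the backend lookup without blocking the conversation.
    task = asyncio.ensure_future(lookup())
    # Wait up to the budget; `done` is empty if the lookup is still running.
    done, _pending = await asyncio.wait({task}, timeout=filler_after)
    if not done:
        speak(filler)  # acknowledge the user instead of staying silent
    speak(await task)  # deliver the real answer once it is ready
```

A fast lookup produces only the answer, while a slow one produces the filler followed by the answer, so the user never sits through unexplained silence.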

The Road Ahead for Voice-First Web Experiences

With a voice-first website, you can prioritize user convenience and comfort, and put an end to the back-and-forth of traditional websites!

Voice captures something a static website can never convey: an emotional sentiment that makes a customer feel heard.

Platforms like Astra help centralize customer resolution, break down user intent, and set an instant tone, which provides a standout customer experience!

The key is not to assume what users are talking about, but to give time, take pauses, and make them feel as if they are part of a real conversation, led only by an AI agent.

Learn about everything that Astra has to offer to fuel sales growth. Book a demo today!

Frequently Asked Questions: Voice-First Web Experiences

Have more questions? See below.

What is the difference between a voice-enabled and a voice-first website?

A voice-enabled site simply adds a voice-to-text button (for example, on a search bar). A voice-first site, powered by agents like Astra, treats the entire visit as a conversation, allowing users to navigate, ask complex questions, and complete tasks entirely through speech.

Can voice AI understand accents and incomplete sentences?

Yes. Modern voice AI is trained on billions of linguistic tokens, allowing it to understand heavy regional accents, “filler” words (like um or ah), and even partial sentences. Astra, for instance, can provide a hyper-local onboarding experience in a user’s native tongue.

Is it safe to grant microphone access?

Absolutely. Leading platforms use a “Consent-First” architecture, meaning the microphone is only active when you explicitly trigger it. Data is handled via strict security protocols to ensure your conversations remain private and secure.

How does voice-first design improve accessibility?

It removes the inconvenience of browsing. Users with visual impairments, motor disabilities, or dyslexia can interact with the site naturally without relying on screen readers or complex menu navigation.

How long does it take to set up a voice-first experience?

While custom builds can take months of development, a platform like Astra can be deployed in minutes. It integrates directly with your website to begin qualifying leads and answering queries without any coding required.